The Client’s Problem in an AI Search World

By 2025 the search landscape had split in two. Traditional SEO still mattered for rankings and click‑throughs, yet generative engines such as Google’s AI Overviews, ChatGPT, Bing Copilot and Perplexity were becoming gatekeepers in their own right. Forbes describes this as the “citation economy”, in which visibility is earned through mentions inside AI answers rather than through clicks. An SE Ranking study further notes that companies can rank well on Google yet remain invisible in AI responses. Against this backdrop, an established B2B client in a competitive, information‑heavy category approached AIBoost Marketing with a paradox: they enjoyed high rankings and steady organic traffic, but AI search engines never mentioned them.

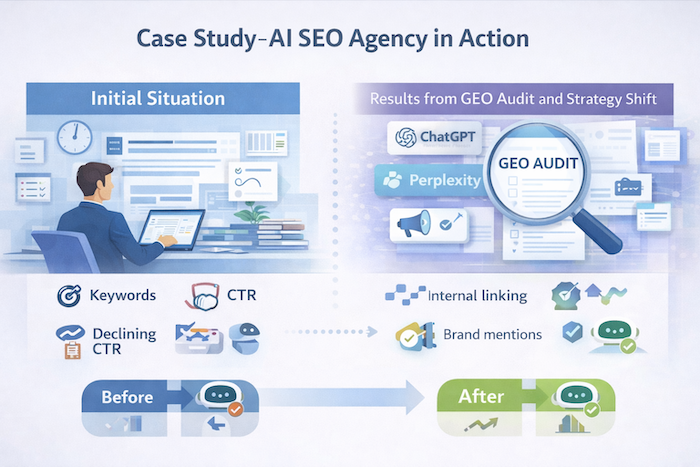

The client’s site was well‑optimised: technical audits had been completed, keywords targeted and backlinks acquired. However, when their C‑suite searched for their brand on ChatGPT, Bing Copilot and Google AI Overviews (formerly SGE), competitors appeared while they did not. Leadership was sceptical about whether AI search affected revenue, but the decline in click‑through rate (CTR) hinted that zero‑click answers were siphoning attention. This case study examines how AIBoost Marketing’s generative engine optimisation (GEO) programme transformed the client’s visibility and what broader lessons it reveals about AI SEO agencies.

Initial Situation Before Engagement

- Industry context: The client operated in a highly competitive B2B software niche where buyers conducted extensive research. The market was saturated with long‑form guides, comparison tables and analyst reports.

- SEO metrics: Organic traffic and rankings were stable. The website consistently held top‑three positions for core keywords and maintained strong domain authority. Nevertheless, Google Search Console reported declining CTR even as impressions grew—a common pattern in zero‑click searches.

- AI visibility: Manual tests across ChatGPT, Bing Copilot and Google AI Overviews yielded no mentions of the client. When asked industry questions, these engines cited competitors, review sites and analyst reports. Leadership asked: “Is AI search actually impacting us?” Without data, the answer was unclear.

The AIBoost Marketing GEO Audit

AIBoost began with a GEO audit combining manual and automated tests:

- Prompt research: Using SE Ranking’s AI Search Toolkit and custom scripts, the team assembled a list of questions buyers ask generative engines. This mirrored the approach of documented GEO campaigns in which agencies researched prompts and sources before optimising.

- Platform testing: Each question was submitted to ChatGPT (with browsing), Bing Copilot and Google AI Overviews. The team recorded which sources were cited, whether the client appeared and how often competitors were mentioned.

- Visibility scoring: For each prompt the team assigned scores for presence (0/1), citation position and source type. They discovered that the client was never selected as a source—AI answers consistently cited competitors or third‑party sites. This reinforced Forbes’ observation that retrieval is the gatekeeper: if content isn’t retrieved, it cannot be cited.
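The per‑prompt rubric above can be illustrated with a small scoring function. This is a minimal sketch under stated assumptions, not AIBoost’s actual model. The class and function names, the source‑type weights and the 1/position decay are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical weights: how much credit each kind of citation earns.
SOURCE_TYPE_WEIGHTS = {"owned": 1.0, "earned": 0.8, "directory": 0.6}
DEFAULT_TYPE_WEIGHT = 0.5  # any source type not listed above


@dataclass
class PromptResult:
    prompt: str
    engine: str
    cited: bool                        # presence: did the client appear at all (0/1)?
    position: Optional[int] = None     # 1-based citation position when cited
    source_type: Optional[str] = None  # e.g. "owned" site vs. "earned" third-party page


def visibility_score(result: PromptResult) -> float:
    """Score one prompt/engine observation on a 0-1 scale (hypothetical rubric)."""
    if not result.cited:
        return 0.0  # absent from the answer: no credit
    # Earlier citations earn more: position 1 -> 1.0, 2 -> 0.5, 3 -> 0.33, ...
    position_factor = 1.0 / result.position if result.position else 1.0
    type_factor = SOURCE_TYPE_WEIGHTS.get(result.source_type, DEFAULT_TYPE_WEIGHT)
    return position_factor * type_factor


def average_score(results: list[PromptResult]) -> float:
    """Mean score across all tested prompts and engines; 0.0 if nothing was tested."""
    if not results:
        return 0.0
    return sum(visibility_score(r) for r in results) / len(results)
```

In the client’s baseline audit every observation would have `cited=False`. The average is therefore 0.0 whatever weights are chosen, which is why presence is scored before position or source type.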

Key Issues Identified

Content written for ranking, not for answer extraction

The audit revealed that content targeted keywords but buried key facts in long paragraphs, making it hard for AI models to extract answers. Forbes notes that retrieval favours clarity and scannability; pages that bury key facts often underperform. The client’s pages opened with marketing fluff rather than concise definitions or summaries, so generative engines skipped them.

Lack of clear definitions or quotable summaries

Unlike answer engine optimisation (AEO) content, which leads with short, direct answers, the site lacked explicit definitions or bullet‑point summaries. AI engines tend to favour passages that can be quoted verbatim, so without answer blocks the client’s insights were never selected.

Weak internal linking

Topics were scattered across siloed pages without a clear hierarchy. AI models rely on entity clarity and topical authority. Without strong internal linking, the site did not signal which pages were authoritative on each subject.

Minimal structured data

The site used basic schema (e.g., BreadcrumbList) but lacked FAQPage, HowTo and Article markup. Richer schema makes it easier for generative engines to parse content.

Limited external authority signals

SE Ranking case studies show that AI models often favour earned media and authoritative third‑party sources. While the client had backlinks, it lacked brand mentions in trusted directories, review sites and industry round‑ups, which reduced its credibility in AI answers.

Strategic Shift Proposed by AIBoost Marketing

After presenting the audit findings, AIBoost proposed a shift from “SEO pages” to answer‑first content architecture. The strategy aligned with the generative engine optimisation framework emphasised by Forbes and Lasso Up: treat AI engines as readers, not just crawlers, and focus on being referenced rather than merely visited. The plan included:

- Content restructuring – rewriting key pages with fact‑dense intros and clearly labelled sections.

- Entity and terminology consistency – ensuring uniform naming conventions across pages to strengthen entity clarity.

- Technical enhancements – implementing advanced schema and improving internal linking.

- Authority building – earning citations from trusted external sources and aligning messaging with neutral, explanatory language that AI prefers.

Actions Taken: Content Restructuring for AI

- Answer‑first writing: AIBoost rewrote high‑priority pages to lead with a concise summary or definition, often a one‑sentence answer followed by a bullet list. This mirrored the inverted pyramid structure recommended for AI SEO.

- Sectioning for extraction: Long paragraphs were broken into subsections with descriptive H2/H3 headings that matched common questions. This approach was inspired by AEO tactics to structure content for direct answers.

- Terminology alignment: All pages adopted consistent terms for products, features and industry concepts. Entities were defined once and linked internally to an authoritative glossary page, reinforcing topical authority.

- FAQs and how‑tos: The team introduced dedicated FAQ sections addressing specific buyer questions and implemented FAQPage schema. For procedural topics, HowTo schema was added. Arc Intermedia notes that schema markup and Q&A formatting help AI engines extract and present answers.
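FAQPage markup is usually embedded in a page as JSON‑LD. The sketch below builds it from question/answer pairs using the schema.org `FAQPage`, `Question` and `Answer` types. The helper names and the sample question are hypothetical placeholders, and whether a particular AI engine reads this markup is an assumption, not something shown here.

```python
import json


def faq_page_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the <script> element that goes into the page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical FAQ entry; real answers should match the visible on-page text.
    faqs = [("What is usage-based pricing?",
             "Usage-based pricing charges customers according to how much of the product they consume.")]
    print(as_script_tag(faq_page_jsonld(faqs)))
```

Generating the markup from the same question/answer source as the visible FAQ section keeps the two from drifting apart.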

Technical and Structural Improvements

- Advanced schema: Article, FAQPage and HowTo structured data were added site‑wide. The team also implemented Product and Review schema on product pages where relevant.

- Internal linking overhaul: Using a pillar‑cluster model, pages were grouped around core topics with clear parent–child relationships. This created a topical hierarchy that AI models could interpret and made deeper pages easier to crawl.

- Crawling controls: Robots.txt was audited to ensure AI crawlers had access to critical pages while excluding irrelevant resources. Canonical tags were standardised, and duplicate content consolidated.

- Content extraction readiness: HTML markup was cleaned to remove unnecessarily nested divs and unneeded scripts. Each section included clear headings, lists and tables so that retrieval pipelines could parse and chunk it more easily.
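Part of the robots.txt audit can be automated with Python’s standard `urllib.robotparser`. This sketch assumes you have the robots.txt text and a list of critical URLs. The sample file, the URLs and the crawler list are illustrative. User‑agent tokens such as GPTBot, OAI-SearchBot, PerplexityBot and ClaudeBot should be checked against each vendor’s current documentation.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens to check; verify each against the vendor's own crawler docs.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot", "Bingbot", "Googlebot")

# Illustrative robots.txt in which GPTBot is accidentally blocked from /guides/.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /internal/
Allow: /

User-agent: GPTBot
Disallow: /internal/
Disallow: /guides/
"""


def blocked_pages(robots_txt: str, urls: list[str],
                  agents: tuple[str, ...] = AI_CRAWLERS) -> list[tuple[str, str]]:
    """Return every (crawler, url) pair that the robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(agent, url) for agent in agents for url in urls
            if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    critical = ["https://example.com/guides/what-is-x", "https://example.com/pricing"]
    for agent, url in blocked_pages(SAMPLE_ROBOTS, critical):
        print(f"{agent} cannot fetch {url}")
```

One caveat: `urllib.robotparser` applies the first matching rule in file order. RFC 9309 and Google use the most specific (longest) match instead. Files with overlapping Allow/Disallow rules can therefore be evaluated differently by real crawlers.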

Authority and Trust Reinforcement

- External mentions: AIBoost researched where competitors were cited (e.g., industry directories, analyst reports, G2/Capterra). The client then pursued similar listings and contributed to third‑party round‑ups. Forbes reports that earned media improves citation odds because AI systems prefer independent sources.

- Thought leadership: Subject‑matter experts wrote guest articles and were interviewed on podcasts, generating high‑quality backlinks and mentions. These pieces were neutrally written to align with AI’s preference for factual, balanced language.

- Schema for reviews: For product pages, the team implemented Review schema with aggregate ratings. This provided trust signals for AI systems that synthesise product recommendations.
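Review schema with aggregate ratings is commonly expressed as a schema.org `Product` carrying an `AggregateRating`. This is a minimal sketch that assumes the individual review scores are integers on a 1–5 scale. The product name and helper are hypothetical. Structured‑data guidelines generally expect the ratings to reflect genuine reviews that are visible on the page.

```python
import json


def product_jsonld(name: str, review_scores: list[int], best: int = 5, worst: int = 1) -> dict:
    """Build schema.org Product JSON-LD whose AggregateRating is computed from review scores."""
    if not review_scores:
        raise ValueError("an AggregateRating needs at least one review")
    if any(not worst <= s <= best for s in review_scores):
        raise ValueError("review score outside the declared rating scale")
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": round(sum(review_scores) / len(review_scores), 1),
            "reviewCount": len(review_scores),
            "bestRating": best,
            "worstRating": worst,
        },
    }


if __name__ == "__main__":
    # Hypothetical product and scores, for illustration only.
    print(json.dumps(product_jsonld("Example Analytics Suite", [5, 4, 5, 4, 4]), indent=2))
```

The rating is computed from the underlying scores rather than typed in by hand, so it stays consistent with the reviews it summarises.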

AI Visibility Monitoring

Following implementation, AIBoost established a monitoring routine:

- Prompt tracking: A list of high‑value prompts was tested weekly across ChatGPT, Bing Copilot, Google AI Overviews, Perplexity and Claude. Results were logged in a dashboard that tracked presence, citation position and narrative accuracy.

- Share‑of‑voice metrics: The team measured the frequency of the client’s citations versus competitors. This mirrored the measurement shift from traffic to representation described by Forbes.

- Consistency checks: The narrative of AI answers was analysed for correctness; hallucinations or misrepresentations were reported as defects to be fixed in content or external sources.
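Share of voice and inclusion rate can both be computed from the logged prompt results. In this minimal sketch, each AI response is reduced to the set of brands it mentions, and the brand names are placeholders. Share of voice is defined here as a brand’s mentions divided by all tracked‑brand mentions. That is one common convention, assumed here rather than taken from AIBoost’s dashboard.

```python
from collections import Counter


def share_of_voice(responses: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Each brand's fraction of all tracked-brand mentions across the responses."""
    mentions = Counter(brand for mentioned in responses for brand in brands if brand in mentioned)
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}


def inclusion_rate(responses: list[set[str]], brand: str) -> float:
    """Fraction of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    return sum(brand in mentioned for mentioned in responses) / len(responses)


if __name__ == "__main__":
    # Hypothetical weekly log: brands mentioned in five AI answers to tracked prompts.
    week = [{"Client", "CompetitorA"}, {"CompetitorA"}, {"CompetitorA", "CompetitorB"},
            {"CompetitorB"}, set()]
    print(share_of_voice(week, ["Client", "CompetitorA", "CompetitorB"]))
    print(inclusion_rate(week, "Client"))  # mentioned in one of five answers
```

The two numbers answer different questions. Inclusion rate shows how often the brand appears at all, which is the kind of “one in five responses” figure reported in the results. Share of voice shows how that presence compares with competitors.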

Early Results Observed

Within three months, AIBoost noted several improvements:

- First AI citations: The client began appearing as a supporting source in Google AI Overviews for key product comparisons and industry definitions. ChatGPT and Perplexity occasionally mentioned the brand when answering broad informational queries. These early citations mirrored case studies where brands achieved initial AI visibility after prompt‑driven content updates.

- Consistent inclusion: The frequency of citations increased gradually. For core queries, the brand moved from absent to being mentioned in roughly one in five AI responses. This was directionally consistent with SE Ranking case results in which brands improved AI visibility by 36% after similar optimisations.

- No traffic spike, but no drop: Organic traffic remained steady despite lower CTR, suggesting that AI visibility did not cannibalise existing traffic. A related observation from Hedges & Company is that engagement metrics can improve even when average session time drops, because visitors find answers more quickly.

Downstream Business Signals

- Rise in branded searches: Google Search Console showed a modest increase in branded queries, suggesting heightened awareness.

- High‑intent visits: Users arriving via AI citation links spent more time on high‑value pages and converted at higher rates, mirroring Hedges & Company’s observation that AI referral traffic drives higher engagement.

- Sales conversations referencing AI: Sales teams reported prospects mentioning the brand’s presence in AI answers (e.g., “I saw you mentioned in ChatGPT’s summary”). While anecdotal, this indicated that AI visibility shaped buyer perceptions.

- Steady traffic: Despite a zero‑click environment, organic traffic did not decline, suggesting that AI optimisation complemented rather than cannibalised SEO efforts.

What Changed Strategically

The engagement triggered a mindset shift across the organisation:

- Rankings to influence: Leadership stopped obsessing over positions and embraced the idea of influencing AI narratives. They recognised that, as Alterian notes, brands now compete for relevance and inclusion within AI‑generated answer sets, not on rank alone.

- Answer‑first standards: The content team adopted answer‑first writing guidelines, using short summaries, bulleted lists and clear headings. Editors were trained to anticipate AI questions and to write neutrally.

- Confidence in measurement: With a monitoring framework in place, the team could see tangible evidence of AI citations. This built confidence that AI search could be influenced and measured—echoing the SE Ranking report that real‑world campaigns drive traffic and revenue when optimised for LLMs.

Key Lessons from the Engagement

- AI visibility is cumulative, not instant: Citations accrue over time as models are retrained and AI search indexes are refreshed. Early mentions are often sporadic, but consistency improves with continued optimisation.

- Structure and clarity beat volume: Short, answer‑oriented sections with clear headings and schema outperformed verbose, keyword‑stuffed pages. Retrieval pipelines favour clarity and scannability.

- SEO foundations remain essential: Crawlability, indexing and authority signals must be in place. GEO did not replace SEO but built on it, confirming that SEO remains necessary but not sufficient for AI visibility.

- Neutral language matters: AI systems favour balanced, evidence‑based content over promotional copy. Aligning tone with AI preferences increases citation likelihood.

- Measurement expands beyond clicks: New KPIs—citation frequency, share of voice and narrative accuracy—provide insights that traditional SEO metrics cannot.

Why This Case Matters

This case study demonstrates that AI SEO is not theoretical. It shows that a brand with solid SEO foundations can still be invisible in AI search—and that the invisibility is often structural rather than reputational. By adopting answer‑first content, adding structured data, strengthening internal linking and earning external citations, the client gained presence within AI answers without sacrificing traditional rankings or traffic.

Real‑world case studies documented by SE Ranking and Hedges & Company mirror these outcomes. SE Ranking’s campaigns achieved improvements in AI visibility, revenue and leads through prompt research, structured content and authority building. Hedges & Company’s auto parts case studies reported AI referral traffic growing from a few visits to 300 per month after implementing content and schema optimisations, with engagement metrics consistently higher than those of organic traffic. These results underscore that optimising for AI search delivers measurable, business‑relevant benefits.

Conclusion: What This Says About AI SEO Agencies

AI SEO agencies like AIBoost operate at the intersection of technical SEO, content strategy and AI behaviour analysis. Their value lies in diagnosing why content is invisible to generative engines, designing answer‑first structures and guiding organisations through new measurement frameworks. They demonstrate that optimisation is possible without “gaming” AI; the focus is on clarity, truth and authority. Brands that act early will shape how AI describes their category; those that delay will inherit a narrative written by competitors. In the evolving search landscape, the question is no longer whether AI matters, but whether your brand is part of the conversation.
