Search engines now act like trusted counsellors rather than link collections: they interpret huge corpora of information, decide what is credible and summarise it into natural‑language answers. Analysts estimate that more than two billion people view AI‑generated search overviews each month and that, by late 2025, AI search had become a communication touchpoint for around 10% of stakeholders globally. Reputations are therefore no longer shaped only by what ranks on Google; they are also shaped by how large language models (LLMs) describe a brand. Generative engines compress complex reputations into a few sentences, and outdated interviews or negative news can persist in AI summaries. As customers increasingly accept AI answers without visiting a site, reputation management must expand into this generative landscape.

What AI Reputation Management Actually Means

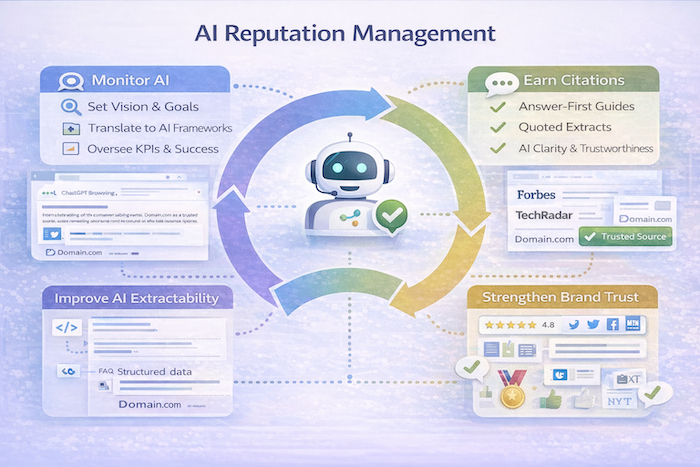

AI reputation management involves monitoring and influencing how brands, products and people are described, summarised and recommended by AI systems. Traditional online reputation management focused on search result pages, review sites and social media. By contrast, AI reputation management monitors the narratives that generative engines build and seeks to ensure that an entity is represented accurately and neutrally. It starts from the fact that AI models synthesise information from earned media, owned content and public conversations before a stakeholder ever engages with the brand directly. Because generative AI often acts as a mediator between companies and the world, influencing AI narratives becomes critical. An accurate AI summary can build trust before a user reaches a website, while an incorrect one can damage confidence.

Why AI Has Raised the Stakes

Compressed reputations

Generative engines summarise a brand’s entire footprint into concise text. Reputation becomes a condensed output: outdated quotes or negative articles can have an outsized impact because the AI may highlight them. In effect, the landscape becomes semantic rather than strictly informational: what matters is how the engine frames the available facts, not only which facts exist.

No context switching

Users rarely click to verify an AI response, so there is little chance to provide additional context. Misleading or incomplete summaries can therefore influence decisions.

Rapid scalability of errors

An inaccurate description can propagate quickly across multiple platforms (ChatGPT, Copilot, Perplexity and Google’s AI Overviews, formerly the Search Generative Experience or SGE). Because generative engines reuse citations from high‑authority sources, a single misconception can cascade across many answers.

Strategic Reality Check

Generative AI does not reward spin. As Forbes observed, AI summarises everything about a brand or executive into a cohesive digital image. If marketing is built on exaggerations or weak claims, that image tends to expose the gap between claim and evidence, and trust suffers. The era of manipulating search rankings through superficial tactics is over; reputation becomes an outcome of credibility and transparency. Brands must therefore focus on factual accuracy, authority and consistency rather than short‑term “optimisation tricks”.

Core AI Reputation Management Services

Monitoring AI outputs across platforms

Modern AI reputation services continuously test prompts and track how brands or executives are portrayed across ChatGPT, Copilot, SGE‑style answers and other AI interfaces. Monitoring includes logging brand, product and leadership mentions and auditing the tone, accuracy and framing of AI‑generated responses. RepTrak notes that AI acts as both a communication channel and a stakeholder, synthesising earned media, owned content and public conversations; monitoring therefore covers the news articles, social posts and knowledge bases that feed these systems.

Identifying reputation risk in AI answers

A diagnostic audit looks for patterns such as:

- Incomplete or outdated descriptions (e.g., AI summarises a company based on old product lines).

- Over‑reliance on third‑party criticism or negative framing, which can result in self‑reinforcing negativity loops.

- Competitors being positioned as the default or “safer” options, implying that the brand lacks authority.

- Executives or founders missing entirely, which can occur if there is no accessible biographical information; this absence can lead AI to fill gaps with generic or inaccurate details.
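
The patterns above can be screened for mechanically before a human reviews the answer. The following is a minimal sketch, assuming plain substring matching is good enough for a first pass; `AuditConfig`, `audit_answer`, the term lists and the company "Acme" are all hypothetical names introduced for illustration, not part of any existing tool.

```python
from dataclasses import dataclass, field


@dataclass
class AuditConfig:
    """Hypothetical audit inputs; every list is supplied by the auditor."""
    executives: list[str] = field(default_factory=list)
    competitors: list[str] = field(default_factory=list)
    outdated_terms: list[str] = field(default_factory=list)
    negative_terms: list[str] = field(default_factory=list)


def audit_answer(answer: str, cfg: AuditConfig) -> dict[str, list[str]]:
    """Flag risk patterns in one AI answer using case-insensitive substring checks."""
    text = answer.lower()

    def found(terms: list[str]) -> list[str]:
        return [t for t in terms if t.lower() in text]

    return {
        "outdated_terms": found(cfg.outdated_terms),
        "negative_terms": found(cfg.negative_terms),
        "competitors_mentioned": found(cfg.competitors),
        # Executives that the answer never names at all.
        "executives_missing": [e for e in cfg.executives if e.lower() not in text],
    }


# Invented example data for a fictional brand.
cfg = AuditConfig(
    executives=["Jane Doe"],
    competitors=["Globex"],
    outdated_terms=["fax gateway"],
    negative_terms=["lawsuit", "recall"],
)
answer = "Acme is known for its fax gateway. Many buyers prefer Globex as a safer option."
report = audit_answer(answer, cfg)
print(report)
```

A keyword screen like this only surfaces candidates; judging tone and framing still needs a reviewer.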

Strategic content creation to influence AI narratives

AI reputation management emphasises publishing neutral, factual explainers that AI can reuse safely. This includes authoritative “about us”, “how it works” and leadership bio pages, as well as addressing common misconceptions proactively. TrizCom PR stresses that AI prioritises trusted third‑party validation and uses structured content to extract answers; thus, content should feature verifiable data, citations from independent sources and clear structure (headings, bullets, FAQs). Axia PR adds that PR professionals should publish earned media across credible outlets and regularly update public profiles, emphasising reputation‑strengthening keywords. Key messages must appear early because generative models often retrieve only snippets.
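
Whether key messages really do appear early can be checked on a draft page. The sketch below is one possible approach, assuming that "early" means the first N words of the page text; the function name `early_message_report`, the 60-word default and the sample copy about a fictional company are all assumptions made for illustration.

```python
def early_message_report(
    page_text: str, key_messages: list[str], window_words: int = 60
) -> dict[str, bool]:
    """Report whether each key message occurs within the first `window_words` words."""
    # Collapse whitespace so line breaks in the page do not hide a match.
    opening = " ".join(page_text.split()[:window_words]).lower()
    return {
        msg: " ".join(msg.lower().split()) in opening
        for msg in key_messages
    }


# Invented sample copy: one message leads the page, the other is buried at the end.
page = (
    "Acme builds payroll software for small businesses. "
    "Founded in 2012, the company serves customers in 14 countries. "
    + "Further background and history follows here. " * 20
    + "Acme is ISO 27001 certified."
)
early = early_message_report(
    page, ["payroll software", "ISO 27001 certified"], window_words=40
)
print(early)
```

A message that comes back `False` is a candidate for moving into the opening paragraph or a heading.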

Executive and leadership presence in AI

AI platforms increasingly receive queries about CEOs, founders and senior leaders. Terakeet warns that AI search growth means executive reputation is under more scrutiny and that negative sentiment can become self‑reinforcing. To avoid “ghost executives”, brands should build credible executive profiles with authoritative bios, thought‑leadership articles and references in reputable media. Regularly updating these assets ensures AI has current and accurate data.

Third‑party validation as a reputation lever

Generative engines trust what others say about you more than what you say. TrizCom PR highlights that AI prioritises trusted third‑party sources, such as earned media, expert bylines, and industry publications, over brand‑owned content. Therefore, reputation strategies must shift from suppressing negatives to earning credible mentions. Securing coverage in reputable media, journals and high‑authority blogs provides evidence that AI models will cite; PR is no longer a vanity exercise but a necessary input into AI narratives.

Correcting and rebalancing AI narratives

When AI summaries are misleading but not malicious, the solution is not to “fight” the algorithm. Instead, organisations should publish clarifying content across their owned channels and encourage third‑party validation that AI systems can pick up through retrieval and future training. Terakeet notes that reputational work requires ongoing effort and visibility‑based metrics that track inclusion and sentiment. As authoritative signals strengthen and content stays fresh, AI answers should gradually shift to reflect the updated picture.
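
Metrics that track inclusion and sentiment can be as simple as two numbers per month. The sketch below assumes each logged AI answer has already been labelled with whether the brand was included and with a sentiment score between -1 and 1 (from a reviewer or a classifier); the `Observation` record, the function names and the sample values are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class Observation:
    day: date
    engine: str
    brand_included: bool
    sentiment: float  # assumed scale: -1 (negative) to 1 (positive)


def summarise(observations: list[Observation]) -> dict[str, Optional[float]]:
    """Inclusion rate over all answers; mean sentiment over answers that include the brand."""
    included = [o for o in observations if o.brand_included]
    return {
        "inclusion_rate": len(included) / len(observations),
        "mean_sentiment": mean([o.sentiment for o in included]) if included else None,
    }


def monthly_summary(observations: list[Observation]) -> dict[str, dict[str, Optional[float]]]:
    """Group observations by calendar month (YYYY-MM) and summarise each group."""
    buckets: dict[str, list[Observation]] = defaultdict(list)
    for o in observations:
        buckets[o.day.strftime("%Y-%m")].append(o)
    return {month: summarise(obs) for month, obs in sorted(buckets.items())}


# Invented sample log.
observations = [
    Observation(date(2025, 1, 10), "engine_a", True, -0.5),
    Observation(date(2025, 1, 24), "engine_b", False, 0.0),
    Observation(date(2025, 2, 7), "engine_a", True, 0.5),
    Observation(date(2025, 2, 21), "engine_b", True, 0.0),
]
trend = monthly_summary(observations)
print(trend)
```

Comparing months rather than individual answers keeps the focus on gradual movement, which is the timescale on which these narratives change.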

AI reputation monitoring workflows

Effective AI reputation management develops prompt libraries for frequently asked questions such as “Is [Brand] trustworthy?” or “Who runs [Company]?” Monitoring involves:

- Testing across multiple engines to see how responses differ.

- Tracking tone and framing over time rather than reacting to a single answer.

- Logging patterns, especially recurring misconceptions or competitor over‑representation.

- Identifying new sources or outlets that may influence AI; for example, a widely shared blog post or new Wikipedia entry.
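
The workflow above can be sketched as a small harness that runs a prompt library against several engines and logs every answer with a timestamp. This is an illustration under stated assumptions: each engine is represented by a plain Python callable that takes a prompt and returns text, and the two stand-in engines below return placeholder strings rather than calling any real service. Both source prompts are templated on a single `{brand}` placeholder for simplicity.

```python
from datetime import datetime, timezone
from typing import Callable

# Prompt library based on the example questions above.
PROMPT_TEMPLATES = ["Is {brand} trustworthy?", "Who runs {brand}?"]

# An "engine" is any function that maps a prompt to an answer (hypothetical interface).
Engine = Callable[[str], str]


def run_prompt_library(brand: str, engines: dict[str, Engine]) -> list[dict[str, str]]:
    """Ask every engine every prompt and return one log record per answer."""
    log = []
    for template in PROMPT_TEMPLATES:
        prompt = template.format(brand=brand)
        for name, ask in engines.items():
            log.append(
                {
                    "timestamp": datetime.now(timezone.utc).isoformat(),
                    "engine": name,
                    "prompt": prompt,
                    "answer": ask(prompt),
                }
            )
    return log


# Stand-ins for real engines; replace with whatever client code is actually available.
def fake_engine_a(prompt: str) -> str:
    return f"[engine_a placeholder answer to: {prompt}]"


def fake_engine_b(prompt: str) -> str:
    return f"[engine_b placeholder answer to: {prompt}]"


log = run_prompt_library("Acme", {"engine_a": fake_engine_a, "engine_b": fake_engine_b})
for record in log:
    print(record["engine"], "|", record["prompt"], "|", record["answer"])
```

Keeping the raw answers alongside timestamps is what makes it possible to track tone and recurring misconceptions over time instead of reacting to a single response.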

What AI reputation management is not

- Not manipulation or censorship – you cannot (and should not) “force” AI to remove legitimate criticism.

- Not guaranteed control – AI’s training data and retrieval methods evolve; the aim is to influence, not dictate.

- Not a replacement for fixing real business problems – reputational issues often stem from product, customer‑service or ethical shortcomings that must be addressed in the real world.

Who Needs AI Reputation Management Most

- Public‑facing brands and executives whose perception directly affects business outcomes.

- Industries where trust is critical, such as SaaS, fintech, health and legal services.

- Organisations experiencing stable demand but declining traffic, indicating that decisions are being made through AI summaries rather than clicks.

- Companies where AI recommendations influence purchase decisions, such as travel or consumer electronics.

Ethical and Long‑Term Considerations

AI reputation management must align with reality. Generative engines look for patterns of trust; short‑term tactics to inflate a brand can backfire, because AI models draw on multiple sources and inflated claims are easily contradicted. Sustainable reputation management therefore requires accuracy, consistency and fairness. AI misinformation rates can increase if models ingest low‑quality or biased content; PR professionals must champion ethics by providing fact‑checked, transparent narratives. Over time, such an approach builds authentic trust with both customers and algorithms.

Integration with GEO and SEO

AI reputation management works alongside Generative Engine Optimisation (GEO) and classic SEO:

- SEO ensures that a site’s pages are crawlable and indexable; without this foundation, AI cannot access the information needed to form narratives.

- GEO focuses on being selected and cited within generative answers; it shapes visibility, that is, whether the brand appears in AI results at all.

- AI reputation management shapes interpretation, focusing on what those generative answers say. RepTrak notes that AI synthesises earned, owned and public information; thus, content, media relations and PR must align with GEO strategies.

A comprehensive strategy integrates all three disciplines: SEO provides discoverability, GEO earns visibility and citations, and reputation management ensures credibility and trust in AI descriptions.
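
The crawlability foundation can be spot-checked from a site's robots.txt. The sketch below uses Python's standard-library `urllib.robotparser` on an inline sample file; the sample rules and the URL are invented, and `GPTBot` and `Googlebot` are used only as example user-agent tokens. The tokens that matter for a given engine should be taken from that vendor's own documentation.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawl_access(robots_txt: str, url: str, user_agents: list[str]) -> dict[str, bool]:
    """Return, per user agent, whether the robots.txt rules allow fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {ua: parser.can_fetch(ua, url) for ua in user_agents}


access = crawl_access(
    ROBOTS_TXT, "https://www.example.com/about", ["GPTBot", "Googlebot"]
)
print(access)  # {'GPTBot': False, 'Googlebot': True}
```

In this sample the "about" page is open to one crawler and closed to the other, which is the kind of gap that would keep an authoritative page out of some AI answers.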

Conclusion

Generative AI is reshaping how reputation works. AI tools summarise a brand’s entire history and external signals into a few sentences that many users accept without question. AI reputation management therefore involves monitoring how generative systems portray your brand, identifying risks, creating factual and structured content, securing third‑party validation, and updating narratives over time. It is not about gaming algorithms but about aligning marketing with reality and building trust. Brands that invest in clarity, accuracy and authority will influence how AI speaks about them; those that ignore these changes will find their narratives shaped by others.