Search engine optimisation (SEO) has always involved discovering what algorithms value and aligning content accordingly. However, generative engine optimisation (GEO) extends beyond ranking pages: large language models (LLMs) summarise and paraphrase content, and users increasingly treat AI answers as authoritative. Because generative engines rephrase and mix sources, mistakes and manipulation can scale rapidly. Marketers therefore shoulder greater responsibility: influencing an AI’s training or retrieval sources can mislead millions of users.

Why ethics matter more in AI SEO than in traditional SEO

- Amplification of errors: LLMs generate new text based on patterns in training data. They can produce confident but false statements, sometimes citing nonexistent legal cases or repeating satire as fact. When AI snippets appear at the top of the page, users may assume neutrality and accuracy.

- Summarisation, not just ranking: Generative engines extract and rephrase meaning‑dense points rather than simply ranking pages. Vague or inaccurate wording may be rephrased incorrectly, changing the brand’s message.

- Bias and opacity: AI models learn from biased or incomplete data. Without careful oversight, they may reinforce historical inequities.

- Legal exposure: Companies can be held liable for misinformation their chatbots provide. In 2024 a British Columbia tribunal ordered Air Canada to compensate a customer after its chatbot misstated the airline’s bereavement fare policy.

Why AI SEO Raises New Ethical Risks

Generative engines summarise and rephrase, not just rank

Rather than sending traffic directly to pages, LLMs summarise diverse sources to answer questions. This reduces control over brand messaging and makes precise, factual content essential. A GEO primer published on Medium warns that generative search can blend multiple sources and misrepresent a brand if its content is unclear. Because generative models reference only a limited number of sources, they prioritise well‑organised, meaning‑dense content over repetition.

Users may treat AI answers as neutral or authoritative

The Harvard Kennedy School Misinformation Review notes that people often accept AI‑generated explanations without recognising the underlying model. AI hallucinations can thus misinform large audiences. Brand reputation suffers when inaccuracies propagate, and businesses may be legally responsible for AI errors.

The temptation to “game” AI (and why it’s dangerous)

- Early signals of influence: Experiments suggest that repeating content across many sites can influence an LLM’s training data. Some marketers therefore consider creating content farms or injecting false information to bias AI responses.

- Parallels with black‑hat SEO: Black‑hat tactics like link farms previously exploited search rankings. Poisoning AI training data is the equivalent for generative engines: injecting as few as 250 malicious documents can backdoor a model and change its behaviour.

- Long‑term harm: Short‑term manipulation can yield citations but undermines trust. Search Engine Journal notes that poisoning AI systems for brand advantage is unethical and likely to backfire.

Black‑Hat AI SEO Tactics to Avoid

AI SEO is susceptible to the same unethical tactics that plagued early SEO, but their impact is magnified. Avoid:

- Flooding the web with low‑quality or false content: Mass‑produced AI content often violates search policies. Google’s spam policies classify generating large volumes of content primarily to manipulate rankings, rather than to help users, as “scaled content abuse”.

- Manufacturing fake authority or citations: Creating fake reviews or citations to game AI systems is unethical; Search Engine Land warns against generating fake reviews or manipulating data.

- Content farms designed to bias AI outputs: Coordinated repetition can poison training data; Anthropic’s research shows that as few as 250 malicious documents can backdoor a model.

- Rewriting competitors’ content: AI engines filter out semantic duplicates. “Rewritten” content becomes low‑value duplicate “AI slop,” and generative engines tend to de‑value repetitive information rather than cite it.

Misinformation as an AI SEO Risk

Generative AI can hallucinate: it may invent legal cases or repeat satire as fact. Brands that seed inaccurate information risk:

- Repeating falsehoods: Hallucinations appear authoritative and can misinform customers.

- Accountability for AI errors: The Air Canada case shows that companies are responsible for the information their chatbots give customers.

- Legal, reputational and trust consequences: Customers may sue for damages, regulators may impose fines, and trust erodes quickly.

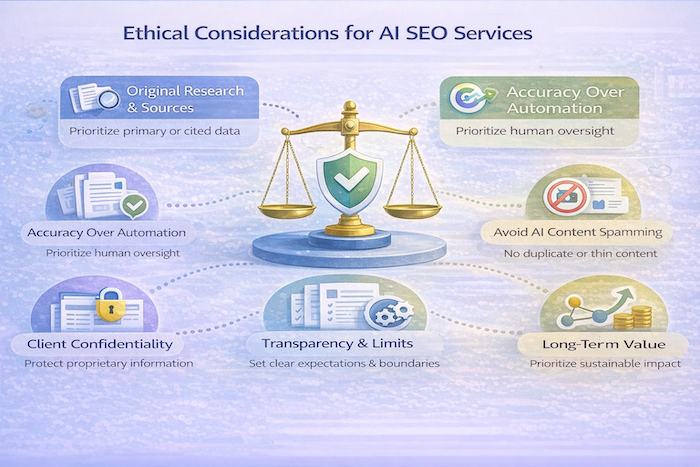

The Ethical Alternative: Accuracy Over Influence

Instead of trying to trick AI systems, ethical optimisation focuses on improving clarity and ensuring correct answers:

- Optimise for truth, not just mention: Encouraging AI to cite your site should stem from providing reliable, well‑structured information that answers questions accurately. LLMs prioritise meaning‑dense, organised content.

- Long‑term visibility depends on reliability: As generative models update, they tend to down‑weight manipulated or low‑quality sources. Building a reputation for accuracy makes it more likely that citations persist through model updates.

Content Integrity and Originality

- Avoid plagiarism and respect intellectual property: Stolen content remains stolen when an AI tool rewrites it. Sage Publishing’s guidelines require authors to disclose AI use, verify accuracy, and avoid plagiarism.

- Provide unique insights and data: AI engines filter duplicates. ClickRank’s 2026 guide notes that models seek diverse sources and de‑value “semantic duplicates,” rewarding original insights.
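
One way to act on the originality point before publishing is a rough overlap check between a draft and existing pages. The sketch below is only illustrative: it measures word‑level overlap (Jaccard similarity over three‑word shingles), which catches light rewrites but is not the semantic matching that generative engines are described as using. The function names and the 0.5 threshold are assumptions chosen for the example, not values from any cited guide.

```python
import re


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams ("shingles") in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Very short texts are treated as a single shingle (or none if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(a: str, b: str, size: int = 3) -> float:
    """Share of shingles the two texts have in common (0.0 to 1.0)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def needs_originality_review(draft: str, published: list, threshold: float = 0.5) -> bool:
    """Flag a draft for human review if it overlaps heavily with any existing text.

    The threshold is a hypothetical starting point and should be tuned by hand.
    """
    return any(jaccard_similarity(draft, page) >= threshold for page in published)
```

A flagged draft is a prompt for an editor to look closer, not a verdict; a low score likewise does not prove the draft adds anything new.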

Responsible Use of AI Writing Tools

AI should support, not replace, human creators:

- Drafting assistant: Use AI to generate outlines or drafts but retain human judgment. Coggno’s responsible writing policies recommend using AI for defined use cases and requiring human review for all external content.

- Mandatory human review and fact‑checking: All AI‑generated text must undergo human verification to ensure accuracy and brand alignment.

- Editorial ownership: The organisation, not the AI tool, is accountable for the final content.

Transparency With Clients and Stakeholders

No promises of guaranteed AI placement

Clients may expect agencies to “get cited” by ChatGPT or other LLMs. Ethical practitioners must explain that AI visibility is probabilistic:

- Crawler access does not guarantee inclusion: Allowing AI crawlers does not ensure your content will appear, and blocking them may not fully prevent its use. As SALT.agency puts it, “blocking AI crawlers is not a guarantee that your content will be excluded. Allowing them is also no guarantee of visibility”.

- AI rules evolve: Generative models change frequently. Promising fixed placements is dishonest.

Communicate uncertainties and limits

- Explain data use and algorithms: Ethical marketing emphasises transparency. A MarTech Edge article recommends explaining how AI segments audiences and providing audit trails.

- Discuss data privacy and compliance: Clients should understand how their data is collected, stored and used. Transparency builds trust and meets regulatory requirements.

Ethics in AI Reputation and Narrative Management

AI outputs can influence reputation. Ethical practitioners should:

- Monitor and correct inaccuracies: Sogolytics’ reputation study urges businesses to monitor AI systems and correct inaccuracies. Produce factual content that addresses misrepresentations rather than suppressing criticism.

- Address real issues: If negative narratives reflect genuine problems, fixing underlying issues is more effective than attempting to manipulate AI outputs.

- Avoid narrative manipulation: Intentionally distorting AI responses (e.g., seeding false praise or burying criticism) risks legal and reputational harm.

Respecting AI Platform Policies and Content Usage Rules

- Understand crawler permissions and opt‑out mechanisms: OpenAI’s GPTBot crawler and Google’s Google‑Extended token (a robots.txt control for AI use rather than a separate crawler) can be disallowed via robots.txt. However, compliance with robots.txt is voluntary; some AI crawlers have been caught ignoring it. Use disallow directives as a boundary rather than a guarantee.

- Don’t exploit loopholes: Attempting to trap or confuse AI crawlers, or to hide content from them, can violate platform terms and may provoke legal action. SALT.agency warns that neither manipulating AI crawlers nor relying solely on technical blocks guarantees an outcome.
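
To sanity‑check an opt‑out before deploying it, the rules can be tested locally. The sketch below uses Python’s standard `urllib.robotparser` against an example robots.txt that disallows `GPTBot` and `Google-Extended` while leaving other agents unrestricted. The file contents, the `crawler_allowed` helper and the example.com URL are assumptions for illustration. The check only shows how a compliant crawler should read the rules; it says nothing about crawlers that ignore robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt (an assumption for illustration, not a recommended policy).
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("GPTBot", "Google-Extended", "Googlebot"):
        allowed = crawler_allowed(ROBOTS_TXT, agent, "https://example.com/pricing")
        print(f"{agent}: {'allowed' if allowed else 'disallowed'}")
```

Running the same check against the live file after each site release helps catch accidental changes to the intended boundary.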

Why Ethical AI SEO Is Also the Most Effective Strategy

- AI down‑weights manipulation over time: Platforms continuously improve detection of low‑value or abusive content. Black‑hat tactics produce short‑lived gains and long‑term penalties.

- Trust compounds: Consumers value brands that are transparent and responsive. Sogolytics’ study reports that ensuring transparency and responding quickly builds credibility, while monitoring AI representation and correcting inaccuracies protects visibility.

- Accuracy survives model updates: Reliable, unique information remains relevant when models retrain. AI search prioritises trustworthy content and clear authorship.

Building an Ethical AI SEO Framework

An ethical AI SEO framework integrates human oversight, quality control and accountability:

- Define use cases and guidelines. Clearly outline when and how AI tools may be used. Prohibited uses should include generating content without human review, scraping competitor content, and misleading audiences.

- Quality thresholds for AI‑assisted output. Establish minimum standards for originality, factual accuracy and brand voice. KOanthic’s quality control guide advocates layered fact‑checking that combines automated tools with human verification, source validation, and temporal relevance checks. Monitor brand voice to keep tone and style consistent.

- Regular audits for accuracy and bias. Conduct scheduled reviews of AI content and outcomes. Use automated analysis platforms to check grammar, readability, SEO compliance, plagiarism and factual accuracy, but always pair them with human oversight.

- Documentation and transparency. Create audit trails of AI decisions and ensure explainability. The MarTech Edge article recommends making AI processes explainable and auditable.

- Human‑AI collaboration workflow. Follow a multi‑stage editorial process: initial structure review, accuracy verification, style optimisation and final approval.
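
The multi‑stage process and audit trail described above could be enforced with a simple publishing gate. The following is a minimal sketch under stated assumptions: the `Draft` class, its field names and the stage identifiers are hypothetical and simply mirror the four stages named in the list. Each stage must be signed off by a named human reviewer, in order, and every sign‑off is recorded with a timestamp.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Stage names mirror the four editorial stages listed above.
STAGES = (
    "structure_review",
    "accuracy_verification",
    "style_optimisation",
    "final_approval",
)


@dataclass
class Draft:
    title: str
    ai_assisted: bool
    sign_offs: list = field(default_factory=list)  # audit trail of human reviews

    def next_stage(self) -> Optional[str]:
        """Return the next stage awaiting sign-off, or None when all are done."""
        done = len(self.sign_offs)
        return STAGES[done] if done < len(STAGES) else None

    def sign_off(self, stage: str, reviewer: str, note: str = "") -> None:
        """Record a human sign-off; stages must be completed in order."""
        expected = self.next_stage()
        if expected is None:
            raise ValueError("all stages are already signed off")
        if stage != expected:
            raise ValueError(f"expected stage {expected!r}, got {stage!r}")
        self.sign_offs.append({
            "stage": stage,
            "reviewer": reviewer,
            "note": note,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def ready_to_publish(self) -> bool:
        """A draft is publishable only after every stage has a sign-off."""
        return self.next_stage() is None
```

Keeping the sign‑offs as plain records makes them easy to export for the scheduled audits; a real workflow would also need to persist them and verify reviewer identity.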

Agency and Consultant Responsibility

Marketing agencies and consultants wield significant influence over their clients’ digital presence. Ethical responsibility includes:

- Duty of care to clients and end users: Webolutions notes that when marketers integrate AI into customer journeys, they must protect user privacy, reduce bias and communicate transparently. Clients rely on professionals to safeguard their reputation.

- Saying “no” to unethical requests: Agencies should refuse to engage in tactics like content farming, fake reviews or poisoning AI models. They must educate clients about the risks and long‑term consequences of manipulative practices.

- Educating clients on risks and opportunities: Explain how generative engines work, the limits of control and the importance of trustworthy content. Transparency about probabilistic outcomes helps manage expectations.

Long‑Term Consequences of Unethical AI SEO

- Loss of brand credibility: Unethical tactics erode trust. Creaitor’s black‑hat SEO guide warns that shortcuts lead to search engine penalties, reputational harm, legal consequences and poor user experiences. Once customers perceive deception, regaining trust is difficult.

- Platform penalties or exclusion: Search engines and AI platforms actively combat manipulative practices. Offending sites can be demoted, excluded from results or banned from training data.

- Reputational damage outlasts traffic gains: Viral scandals spread quickly in an AI‑driven world; Sogolytics notes that recovery requires visible action and transparency. Negative AI summaries can linger, harming brand perception long after a temporary spike in visibility.

Conclusion

AI‑powered search is reshaping how people discover information. Ethical AI SEO is not optional; it is foundational. The goal is not to trick AI but to help it be right. By focusing on accuracy, originality and trust, businesses can achieve sustainable visibility in generative engines and build long‑term relationships with audiences. Ethical practices (transparent communication, rigorous fact‑checking, respect for intellectual property and platform policies, and clear human oversight) ensure that AI serves as an ally rather than a risk. As models evolve, those who prioritise ethics will remain visible, credible and resilient.
