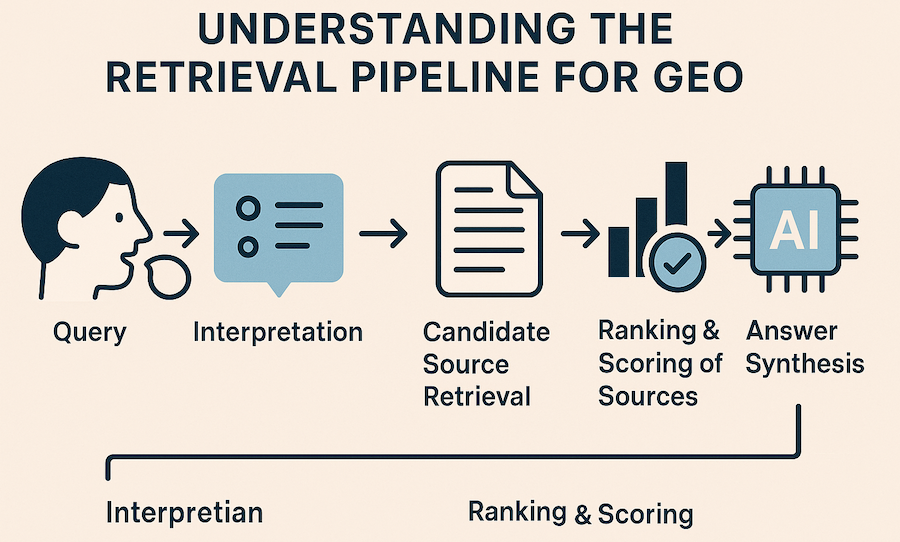

In the generative era of search, the engine at the centre of the experience is no longer a set of ranked links but an artificial intelligence system that synthesizes answers directly for the user. While this looks like magic to the searcher, it hides a complex sequence of steps that determine which sources are considered, how relevant they are, and how they are woven into a final response. That sequence is known as the retrieval pipeline, and it is the beating heart of Generative Engine Optimization (GEO). For brands and publishers, understanding this pipeline isn’t just an academic exercise — it is essential to staying visible when conversational assistants and generative search experiences replace traditional ten‑blue‑links results.

Traditional search optimization has always relied on understanding how algorithms crawl and rank websites. Now, the shift to generative engines requires a similar understanding of how these systems find and assemble information from across the web. Every answer generated by an AI — whether it’s ChatGPT summarizing a concept or Google’s Search Generative Experience providing a blended snippet — depends on which documents are pulled into context. Those documents depend on the retrieval pipeline. If your content isn’t compatible with the pipeline, it won’t be considered in the answer synthesis. On the other hand, if you structure and present information in ways that make it easy for retrieval models to find, score, and inject into an answer, you increase your chances of being cited.

This article unpacks each stage of the retrieval pipeline, from the moment a user issues a query to the final assembly of an AI‑generated answer. It explains why retrieval is different in generative systems compared with traditional search, details the mechanics of dense versus sparse retrieval, and explores how multiple sources are fused together. By the end, you’ll understand why retrieval is the hidden backbone of GEO and how to optimize your content for this new reality.

From Query to Interpretation

Every interaction with a generative engine begins with a query — a typed question, a spoken command, or even an uploaded document. While traditional search engines primarily focus on keyword matching, generative engines start by interpreting the user’s intent more holistically. This involves several sub‑steps:

Tokenization and Contextualization

The engine breaks the query into tokens — units that may represent words, subwords, or punctuation. Unlike early search algorithms that considered each word independently, modern AI systems embed these tokens in high‑dimensional vectors that capture their contextual meaning. When a user types “How does renewable energy impact carbon emissions?”, the model doesn’t just look for pages containing the exact phrase. Instead, it generates vector representations for “renewable,” “energy,” “impact,” “carbon,” and “emissions,” capturing their semantic relationships.

These vector embeddings allow the system to recognize that “green power” or “sustainable electricity” may be relevant even though those exact terms weren’t used. Embeddings are created using large language models trained on millions of examples, which enables them to capture subtle relationships such as synonyms, thematic similarity, and topical associations.

Intent Detection and Semantic Parsing

Once the query is embedded, the engine identifies what the user is trying to achieve. In traditional keyword search, user intent was often inferred from query length or patterns. Generative engines, however, employ intent detection models that classify queries into categories such as informational, navigational, transactional, or conversational. The models also examine parts of speech and syntactic structure to understand whether the user wants a definition, an opinion, a process, or a comparison.

For instance, “best hiking trails near me” is recognized as a location‑based list request, while “explain the process of photosynthesis” is a conceptual explanation request. In voice interactions, the system also considers natural language cues like tone, filler words, and context from previous turns. This semantic parsing ensures that downstream retrieval focuses on the right type of content.

Keyword Matching vs. Vector Embeddings

Traditional search engines rely on sparse retrieval, which uses bag‑of‑words representations and scoring algorithms like TF‑IDF or BM25. These systems treat each word independently and rank documents based on exact matches. Sparse methods are fast and precise, particularly when dealing with specific phrases or rare terms. However, they struggle with synonyms, paraphrasing, and nuanced meaning.

In contrast, dense retrieval uses the aforementioned vector embeddings to represent both queries and documents. Similarity is measured using distance metrics (such as cosine similarity or Euclidean distance) between these vectors. Dense methods excel at capturing semantic meaning; they can match “how to fix a dripping faucet” with content that describes “repairing a leaking tap.” Dense retrieval is computationally heavier, but advances in hardware and algorithms have made it practical for real‑time search.

Most generative engines use a hybrid approach, combining sparse and dense techniques. The hybrid model extracts documents that contain exact keyword matches while also retrieving semantically similar documents via vector search. This dual strategy ensures that the system captures both literal and contextual relevance, laying a robust foundation for the next stages of the retrieval pipeline.

Candidate Source Retrieval

Once the engine understands the query, it must gather a pool of candidate sources. Generative systems do not restrict themselves to traditional web pages. They search across multiple data repositories, including online articles, academic databases, forums, social media posts, proprietary knowledge bases, and sometimes private APIs (where the user has granted access). The objective at this stage is breadth: casting a wide net to find any material that could contribute to a comprehensive answer.

Dense vs. Sparse Retrieval in Practice

The system typically starts by generating query embeddings and searching a vector index. A vector index is a specialized database where each document is stored as a vector representing its semantic meaning. Efficient algorithms such as Hierarchical Navigable Small Worlds (HNSW) enable approximate nearest‑neighbor search, finding the documents whose embeddings lie closest to the query vector. Because embeddings capture context, this method often surfaces pages with similar themes even if they use different words.

Parallel to the vector search, a traditional keyword search engine uses algorithms like Okapi BM25 to find documents containing the exact keywords from the query. The keyword engine tokenizes documents, removes punctuation, and scores matches based on term frequency and inverse document frequency. This approach prioritizes precision — it’s especially useful when the query contains unique identifiers, technical terms, or brand names that might not be well represented in vector embeddings.

After separate searches, results from the dense and sparse systems are combined. Techniques like Reciprocal Rank Fusion (RRF) rescore documents by considering their positions in both lists. A document that ranks moderately well in both the dense and sparse lists can end up ahead of documents that rank extremely high in only one list. This blending improves overall recall and relevance, ensuring that the candidate pool captures both keyword precision and semantic breadth.

Filtering for Freshness, Authority, and Domain Relevance

Not all retrieved documents are equally valuable. Generative engines apply additional filters to refine the candidate pool before ranking. Freshness is assessed by checking publication dates, last‑modified timestamps, or other recency indicators. Queries about current events or rapidly evolving topics may prioritize more recent sources, whereas evergreen questions may tolerate older content.

Authority is evaluated using signals such as domain reputation, author credentials, citations from other trusted sites, and adherence to recognized editorial standards. Professional journal articles, governmental reports, and well‑cited encyclopaedia entries generally rank higher in authority than anonymous blog posts. Engines also consider domain relevance — a respected medical site is more authoritative for a health query than a general forum, even if the forum appears more frequently in search results.

Filtering also accounts for language, region, and safety. Content that matches the user’s language and geographic context is preferred, and harmful or unsafe content is excluded. By applying these filters, the engine constructs a cleaner, more trustworthy set of documents for final ranking.

Ranking and Scoring of Sources

With a refined set of candidate documents, the engine must decide how to order them. Ranking in generative systems goes beyond simple keyword scoring; it integrates multiple signals into a composite relevance score.

Relevance Scoring Using Embeddings and Similarity Metrics

In dense retrieval, similarity metrics measure the distance between the query embedding and each document embedding. Cosine similarity and Euclidean distance are common choices. If embeddings are normalized, these metrics produce equivalent rankings. By computing similarity scores, the system determines how closely each document’s meaning aligns with the user’s intent.

For keyword‑based documents, BM25 scores remain part of the picture. The hybrid system normalizes scores from both dense and sparse sources, ensuring they are comparable. The resulting relevance score reflects both explicit keyword matches and semantic alignment.

Incorporation of Trust Signals

Generative engines are designed to avoid hallucinating facts. To do this, they incorporate trust signals when ranking documents. Trust signals may include the presence of verifiable data (statistics, quotes, and citations), the use of structured markup (such as schema.org or JSON‑LD), clear attributions, and adherence to established editorial standards. These signals indicate that a document is reliable and that its facts can be cross‑checked.

Authority and topical expertise play into trust signals as well. Content produced by recognized experts, institutions, or governmental bodies may be weighted more heavily. Similarly, documents that use entity‑rich language — where people, places, products, and concepts are clearly named — are easier for the engine to parse and verify. By combining these trust signals with relevance scores, the engine can select sources that are not only relevant but also credible.

Balancing Precision and Recall

An effective retrieval system balances precision (ensuring that selected documents are closely aligned with the query) with recall (ensuring that no important documents are missed). Dense retrieval tends to maximize recall but may introduce loosely related content; sparse retrieval maximizes precision but can miss contextually relevant pieces. Blending these methods and applying trust signals yields a ranking that surfaces documents with the right mix of accuracy and breadth. This balanced ranking is crucial for producing a comprehensive and trustworthy answer.

Retrieval‑Augmented Generation (RAG) Explained

Once documents are ranked, they are passed to the generative model. This stage is known as Retrieval‑Augmented Generation (RAG), a technique that augments large language models with external context. RAG systems combine the strengths of retrieval and generation to produce answers that are both fluent and grounded in facts.

Step‑by‑Step Process

- Retrieval: As described above, the system gathers and ranks a set of relevant documents. Typically, only the top few documents (or even specific passages) are selected to keep the context manageable.

- Context Injection: The selected documents are concatenated with the user’s query and fed into the generative model’s context window. The prompt might include headings, citations, or metadata to help the model distinguish between different sources.

- Answer Synthesis: The language model reads the combined prompt and generates a response. Since the answer is conditioned on the retrieved documents, it can quote or paraphrase factual statements. The model also uses its internal knowledge to connect concepts and fill gaps, but the retrieved content anchors the answer in verifiable information.

Benefits of RAG

RAG offers several advantages over generation without retrieval. Grounding reduces hallucinations — the tendency of language models to fabricate details — because the model can reference real documents. Factual accuracy improves when the model can cite specific sources. RAG also expands coverage; by retrieving from up‑to‑date databases, the model can answer questions about recent events or niche topics that were not part of its training data. Finally, RAG allows domain adaptation without retraining the entire model; new documents can be indexed and surfaced in responses without fine‑tuning the language model itself.

Fusion of Multiple Sources

Generative engines rarely rely on a single source. Combining multiple documents enables them to cross‑validate information, fill in gaps, and provide richer responses. Fusion involves both selection and synthesis.

Aggregating Overlapping Facts

When different documents provide overlapping facts, the engine merges them. For example, if three articles state that a particular telescope launched in 1990, the engine can confidently include that date in the answer. Overlapping information acts as a reinforcement signal that the fact is widely agreed upon.

Handling Conflicting Information

Occasionally, sources disagree. Generative engines detect these conflicts by comparing assertions across documents. Strategies for handling conflict include: choosing the majority view if one viewpoint dominates; preferring higher‑authority sources (such as academic journals over blog posts); or presenting multiple perspectives with appropriate qualifiers (“some sources say… while others report…”). In cases of high uncertainty, engines may omit conflicting details altogether to avoid promoting incorrect information.

Why Single‑Source Reliance Is Rare

Relying on one document increases the risk of echoing errors or incomplete information. By integrating multiple sources, engines improve resilience and reduce bias. Fusion also allows the system to cover different facets of a complex query — for instance, combining a scientific explanation with a historical context and a practical example. This multi‑view approach leads to answers that are more comprehensive and trustworthy.

Examples of Retrieval Pipelines in Action

Generative search experiences are already available in a number of products. Observing how they operate offers insights into real‑world retrieval pipelines.

Google Search Generative Experience (SGE)

Google’s experimental Search Generative Experience inserts AI‑generated summaries above or alongside traditional results. When a user asks a complex question, SGE retrieves content from across the web, assembles a concise explanation, and provides clickable citations to the underlying sources. The citations reveal that multiple pages were consulted. While the precise algorithms are proprietary, SGE appears to blend vector search and keyword search, prioritizing pages with strong topical authority and clear answers. It also limits the length of the generated summary and surfaces follow‑up questions, encouraging deeper exploration.

Perplexity AI’s Source‑Linked Answers

Perplexity AI functions like a conversational search engine. After a user asks a question, Perplexity retrieves relevant pages, displays a succinct answer, and lists the sources used. The tool emphasizes transparency: each part of the answer is linked to a supporting document. It uses retrieval‑augmented generation to provide real‑time facts, and its pipeline ensures that the retrieved documents are current and authoritative. Users can click citations to read the original pages, reinforcing trust in the answer.

Bing Copilot’s Document Summarization Layer

Microsoft’s Bing Copilot (formerly Bing Chat) integrates search into a conversational assistant. It not only retrieves web documents but also summarises entire web pages or documents when requested. The pipeline first uses dense and sparse retrieval to find relevant content, then summarises the results using generative models. Citations are interwoven into the summary, and users can expand them to see the underlying text. Bing’s system also combines results from its proprietary index and partner APIs, illustrating the multi‑source nature of retrieval.

Challenges in Source Gathering

While retrieval pipelines are powerful, they face several practical challenges.

Latency and Computational Cost

Dense retrieval and hybrid search require significant computation, especially when searching large vector databases or performing re‑ranking. Approximate nearest‑neighbor algorithms like HNSW improve speed, but the need to combine results from multiple methods adds complexity. When a generative system must respond in near real‑time, it must balance retrieval depth with latency. Efficient index structures, caching, and hardware acceleration help mitigate these issues, but they remain ongoing challenges.

Bias Toward High‑Authority or High‑Traffic Sites

Authority and popularity signals can introduce bias. Well‑established domains with many citations are more likely to appear in the candidate pool, potentially crowding out smaller but equally informative sources. Generative systems must guard against blindly trusting high‑traffic sites, especially when addressing niche topics or emerging research where smaller publications may hold more accurate information.

Risk of Outdated or Manipulated Data

Retrieval engines rely on external content that can become outdated or be deliberately manipulated. If a widely cited page contains incorrect data, that error can propagate into generated answers. Similarly, spammers may attempt to game the system by creating pages filled with keywords or fabricated authority signals. To counter these risks, engines apply freshness filters, cross‑check facts across multiple sources, and monitor for known manipulation patterns. Continuous updates to the index and the ability to remove or down‑rank questionable sources are essential to maintain answer quality.

Implications for Generative Engine Optimization (GEO)

Understanding the retrieval pipeline illuminates why certain content surfaces in AI‑generated answers while other content remains invisible. It also highlights specific strategies for brands seeking to improve their visibility.

Structured, Factual, and Quotable Content

Because dense and sparse retrieval rely on both semantic meaning and exact phrasing, clarity matters. Use clear headings, concise sentences, and explicit naming of entities. When providing facts, present them in short, quotable statements that can be easily extracted by retrieval algorithms. Lists, tables, and definition boxes are particularly effective because they align with the snippet‑style answers many generative engines prefer.

Schema, Metadata, and Entity Clarity

Structured data markup (such as schema.org JSON‑LD) provides explicit context that search engines can parse. Marking up authorship, publication dates, product attributes, and FAQs helps retrieval systems identify the purpose and credibility of your content. Using clear language around entities — including scientific names, industry terms, and well‑defined labels — improves the accuracy of entity recognition and reduces ambiguity in vector embeddings. Metadata like alt text for images, captions for tables, and descriptive titles further guide the retrieval engine.

Future‑Proofing for Multi‑Modal Pipelines

Generative engines are evolving beyond text. Voice assistants incorporate speech recognition and natural language understanding; image‑based search uses computer vision and multimodal embeddings; video summarization extracts key information from audiovisual content. Content creators should consider multi‑modal optimization: provide transcripts for videos, captions for images, and audio descriptions where appropriate. Ensure that your brand’s information is consistent across mediums so that retrieval models can easily align and fuse data from various formats.

Conclusion

Retrieval may be invisible to users, but it is the backbone of every AI‑generated answer. From tokenizing and embedding queries to blending dense and sparse search, filtering candidates, re‑ranking results, and injecting context into a generative model, the retrieval pipeline decides which voices are heard in the generative era. Businesses that align their content with these mechanics — by focusing on clarity, structure, trust, and multi‑modal accessibility — will be better positioned to be cited in the answers that shape public understanding.

Generative Engine Optimization is not just about prompting large language models; it’s about anticipating and supporting the retrieval pipeline that feeds them. By making your information easy to find, evaluate, and quote, you ensure that your expertise remains visible in a world where users increasingly rely on AI to deliver the answers they need.

Want to know whether ChatGPT, Perplexity, or Google AI Overviews mention your firm? Run a free first-party visibility audit on your domain in under a minute and see exactly which queries cite you and which do not.